Reader's Digest – 19 November 2021

Our weekly review of articles on terrorist and violent extremist use of the internet, counterterrorism, digital rights, and tech policy.

Webinar Alert!

- On Tuesday, 23 November, we will be hosting our next webinar in partnership with the GIFCT, on “Countering terrorist use of the internet, moderating online content and safeguarding human rights”. You can register for the event here. Please stay tuned for upcoming agenda details.

Tech Against Terrorism Updates

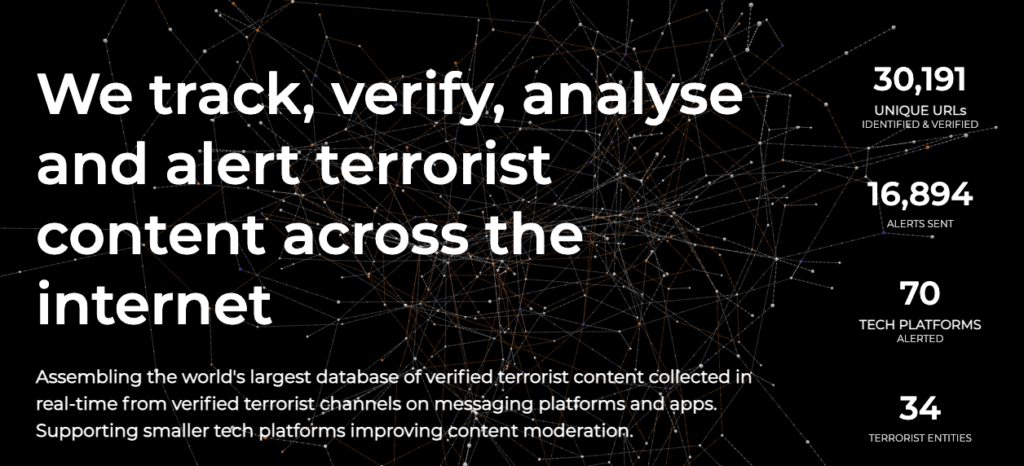

- Our Terrorist Content Analytics Platform (TCAP) has now sent more than 10,000 alerts to 64 tech platforms, flagging verified terrorist content.

- Our founder, Adam Hadley, spoke at the Australian Senate Inquiry into the Abhorrent Violent Material Act. His statement focused on terrorist actors' use of small platforms, their adversarial shift away from larger, more heavily moderated platforms, and the extra-territorial implications of the proposed legislation.

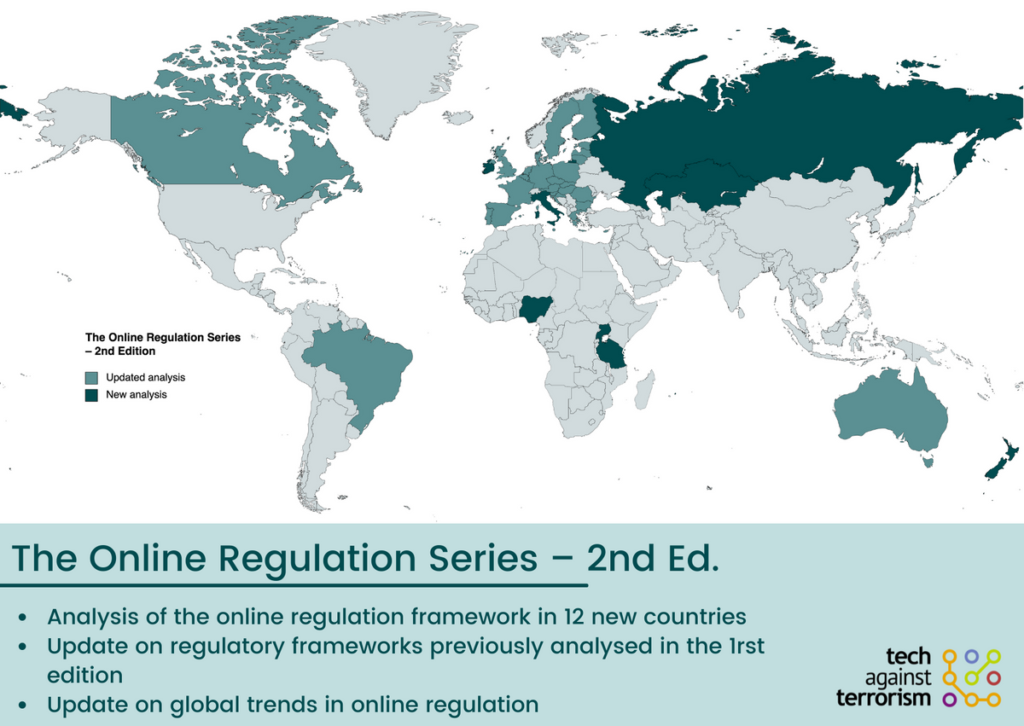

Online Regulation Series 2.0

The Online Regulation Series is back for a 2nd edition. This week, we have published blog posts on

Keep an eye out next week for our analyses of:

- Mauritius

- Tanzania

- Canada

To read all the country analyses from the 1st Edition of the ORS, please see our dedicated Online Regulation Series Handbook. The Handbook provides a state of play of online regulation and shares practical insights to help tech companies better understand the complex and fast-changing global regulatory landscape.

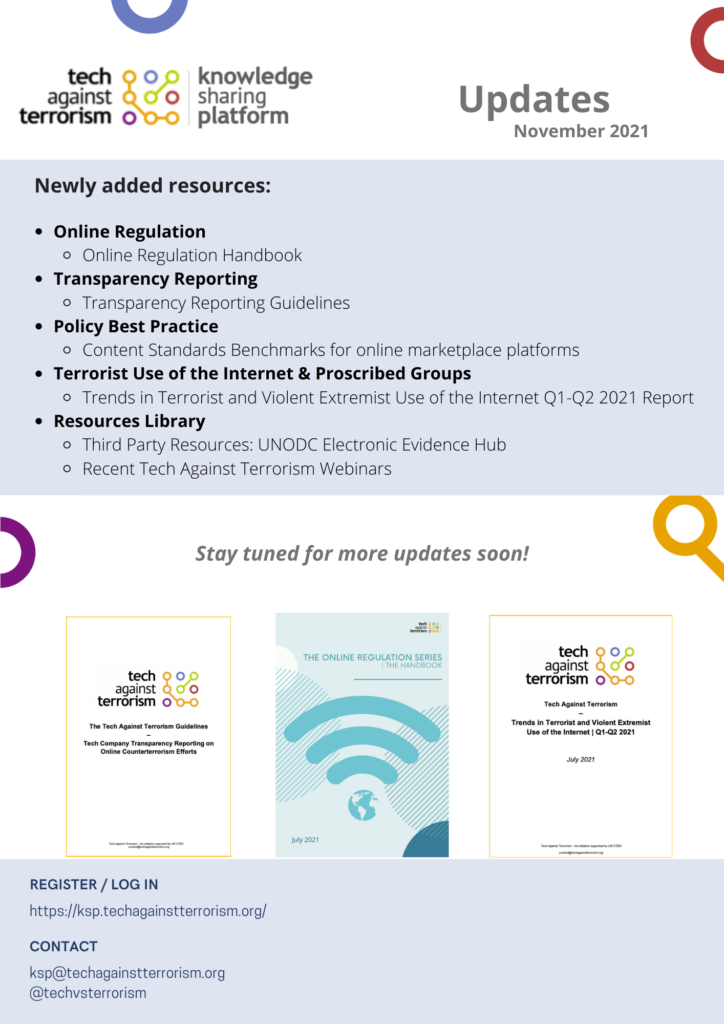

Knowledge Sharing Platform (KSP)

Over the past month, we have updated the Knowledge Sharing Platform, adding to the following sections:

- Online Regulation:

We have added our recently published Online Regulation Series Handbook, which analyses more than 60 laws and regulatory proposals across 17 countries and their implications for countering terrorist and violent extremist content. Throughout November and December 2021, we will continue to update this section regularly with the latest country analyses from the 2nd Edition of the Online Regulation Series.

- Transparency Reporting:

We have updated our Key Recommendations and Guidelines for Transparency Reporting page to include the Tech Against Terrorism Guidelines on Transparency Reporting on Online Counterterrorism Efforts, for tech platforms and governments.

- Policy Best Practice:

We have added a benchmarking table for online marketplace platforms to the Content Standards Benchmarks page.

- Terrorist Use of the Internet & Proscribed Groups:

We have added our recently published "Trends in Terrorist and Violent Extremist Use of the Internet Q1-Q2 2021" report to the Terrorist Use of the Internet page.

- Resources Library:

We have updated our Third Party Resources page to include the UNODC Electronic Evidence Hub and some of its key resources and tools, such as the Data Disclosure Framework and the Model Forms on Preservation and Disclosure of Electronic Data. We have also updated the Tech Against Terrorism Webinars section to include the latest webinars we have organised with the Global Internet Forum to Counter Terrorism (GIFCT).

In the coming weeks, we will be reviewing and updating existing resources to ensure they remain accurate and up to date. Stay tuned for upcoming communications about the next round of updates!

Top Stories

- A report from the EU Agency for Fundamental Rights has stated that the European Commission should improve the legal definition of 'terrorism' to provide greater clarity in criminal law.

- Ambassadors from all 27 EU member states have approved proposals for a 24-hour deadline for removing illegal content under the EU Digital Services Act (DSA). The DSA seeks to regulate all online services, from social media platforms to e-commerce.

- On Thursday, 18 November, the UN’s Counter-Terrorism Committee and the ISIL (Da’esh) and Al-Qaida Sanctions Committee held a meeting on preventing and suppressing the financing of terrorism.

- Twitter has launched an official Crypto Team to focus on blockchain, crypto, and other decentralised technologies. Twitter stated that crypto aligns with its goals, including creator monetisation and decentralised social media.

- Twitter has suspended its “Trends” feature in Ethiopia, stating that “inciting violence or dehumanizing people is against our rules.” The company said the feature was being abused in the region to inflame the ongoing conflict, as its algorithm was amplifying violent messages.

- Responding to the bombing in Liverpool on Sunday, 14 November, UK Security Minister Damian Hinds stated that more people may have “self-radicalised” online during the pandemic.

- Research by the Institute for Strategic Dialogue has shown an increase in the use of encrypted and unmoderated social media platforms by far-right extremists in Ireland. You can see our assessment of terrorist and violent extremist use of encryption and related mitigation strategies here.

- The Combating Terrorism Center has published an assessment of the first three months of Taliban rule in Afghanistan.

Tech Policy

- Belarus announces list of social media sites deemed ‘extremist’: The Belarusian Ministry of Internal Affairs has announced a new list of social media sites and channels that it considers “extremist.” These include tech platforms that offer end-to-end encrypted (E2EE) messaging services, such as Telegram and WhatsApp. Belarusian citizens who subscribe to, contribute to, or own these channels may face up to seven years in prison under Article 361 of the Belarusian Criminal Code. This article covers “harm to the country’s sovereignty” and would allow prosecution under its subsection on “taking part in extremist formations”. The author notes that the amended law is likely a response to the 2020 protests against President Alexander Lukashenko. Protesters reportedly used numerous tech platforms and channels to coordinate, and authorities stated that Telegram was their primary tool because it offered E2EE messaging. (Mohamedbhai, Jurist, 16.10.2021).

To read more about E2EE, see our “Terrorist Use of E2EE: State of Play, Misconceptions, and Mitigation Strategies” report and summary.

- Facebook took down a New Mexico militia group’s accounts. Prosecutors say it deleted key evidence: At the June 2020 protests in New Mexico, members of the New Mexico Civil Guard were accused of fomenting violence, and a civil case was brought seeking an injunction to stop them from attending future protests as a paramilitary organisation. As part of the case, prosecutors asked Facebook for evidence from the group’s page and its members. However, Facebook had already deleted the page and all of its information as part of its content moderation enforcement. The article discusses the ongoing tension between tech platforms’ responsibility to remove content and accounts that violate their terms of service and the need to preserve information for future use in criminal prosecutions. (Oremus and Timberg, The Washington Post, 15.11.2021).

To read more about Tech Against Terrorism’s work to preserve evidence of war crimes while ensuring effective content removal, see this article about the TCAP.

For any questions, please get in touch via:

contact@techagainstterrorism.org