Headline news

- In early August, we published a report on the public online consultation process on the Terrorist Content Analytics Platform (TCAP). The release of this report is an important step in our commitment to ensure that the TCAP is developed in a transparent manner whilst respecting human rights and fundamental freedoms, including freedom of speech. Read the report here to learn more about key findings from this process.

- The overall consultation process is still ongoing in 2020. If you are a tech company, academic researcher, or a representative of civil society, we are interested in speaking with you to solicit expertise to help inform the development of the TCAP. If you are interested, please contact us.

- Tech Against Terrorism is currently preparing our feedback for the ongoing public consultation on the EU Digital Services Act package and for Ofcom’s call for evidence on the new UK regulation for video-sharing platforms. We encourage all experts and tech platforms to take part in these consultations, which are important opportunities to help influence policy-making. You can find Google’s submission on the EU Digital Services Act package here.

- Trend alert: Accelerationism: The newest episode of the Tech Against Terrorism Podcast is here. This time we take a deep-dive into the accelerationist doctrine and its popularity amongst neo-Nazis, together with Professor Matthew Feldman and Ashton Kingdon from the Centre for Analysis of the Radical Right, and Ben Makuch from Vice News. Listen here.

What's up next?

- Our next podcast will cover the online world of Incels. Watch this space for its release!

- After a summer break, we will be back soon with our e-learning webinar series. More info on our fall schedule to be shared soon!

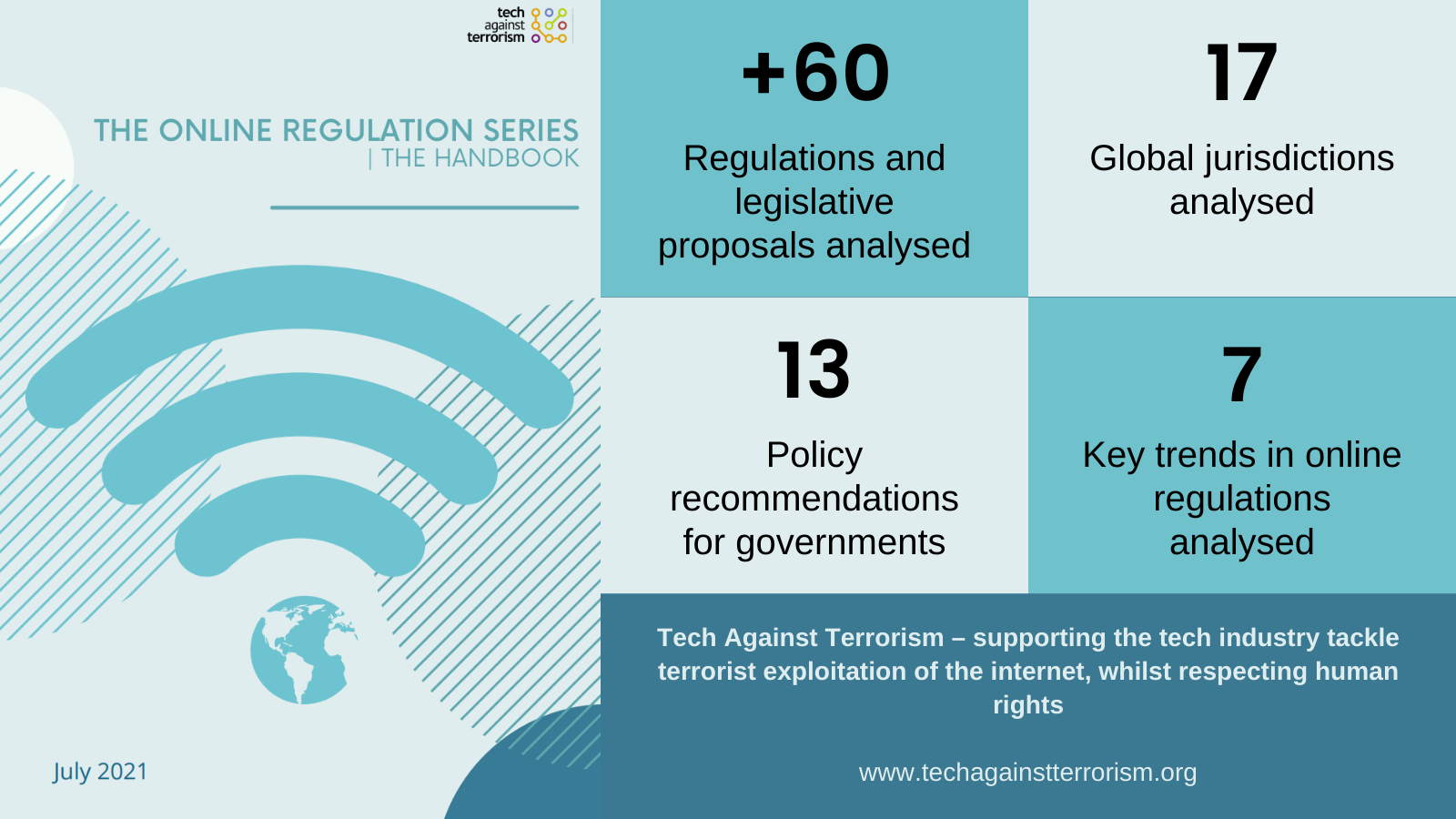

- Mapping global regulation of online terrorist activity: With global regulation of online speech in constant evolution, Tech Against Terrorism is working on a dedicated knowledge-sharing series to report on the state of regulation of terrorist and violent extremist content online, and what it means for tech platforms.

Are you an expert in online regulation or have resources you would like to share? Get in touch at contact@techagainstterrorism.org.

- TCAP office hours: To help answer your questions about the Terrorist Content Analytics Platform, we will soon release a schedule of monthly “office hours” open to our stakeholders, to share news about the latest development and policy updates. We will share more information about this shortly.

- Mitigation strategy – online assessment for tech platforms: We are updating our online assessment for tech platforms to ensure that we can provide platforms with the most accurate understanding of their strengths and weaknesses in responding to terrorist exploitation. This assessment is central to the Tech Against Terrorism Membership and to the Mentorship Programme we implement on behalf of the GIFCT, and is designed to provide tech platforms with an indication of the effectiveness of their current mitigation processes against terrorist exploitation.

Don't forget to follow us on Twitter to be the first to know when a webinar is announced, or a podcast is released!

Tech Against Terrorism Reader's Digest – 4 September

Our weekly review of articles on terrorist and violent extremist use of the internet, counterterrorism, digital rights, and tech policy.

Top stories:

- GNET has published its first report, presenting its newly developed analytical tool, which relies on text analysis to assist and complement technology-driven moderation and the removal of violent extremist material by online platforms at scale.

- The Centre for Analysis of the Radical Right (CARR) has launched a new podcast series called Right Rising. Starting with its inaugural episode, the series will discuss "right wing authoritarian and extremist movements" worldwide.

- On Wednesday, the US Department of Justice announced the seizure of two websites operated by Kata’ib Hizballah, a specially designated national and a foreign terrorist organisation in the US. See our tweet on this development here.

- This week marks the beginning of the trial of the January 2015 Charlie Hebdo, Hyper Cacher, and Montrouge terrorist attacks in France. The trial is set to last until early November and will see 150 witnesses and experts provide testimony and evidence.

- Last week, the Christchurch attacker was sentenced to life imprisonment without parole. Following this, New Zealand Prime Minister Jacinda Ardern announced his designation as a terrorist entity under the country’s Terrorism Suppression Act.

- The Swedish Defence University has released a report on the “radical nationalist” environment in Sweden, covering movements spanning from neo-Nazi groups to the Swedish alt-right. An English summary of the report can be found here, and the full report (in Swedish) here. Earlier this year, we covered Swedish neo-Nazi groups and their online activity in one of our podcast episodes – have a listen here.

Tech policy

- Content moderation best practices for startups: Taylor Rhyne reminds us that content moderation is not a problem limited to large, global platforms but concerns all platforms, including the smallest ones, given the detrimental effect that a small number of “toxic members” can have. For Rhyne, content moderation is “a first-class engineering problem that calls for a first-class solution”. This calls for accessible and flexible policies that encompass the variety of content moderators deal with on a daily basis, including “the shades of grey” of this content, and for technology that can underpin these policies and render them affordable and effective. To support the development of content moderation practices amongst platforms of all sizes, Rhyne complements this call for a “first-class solution” with a comprehensive list of content moderation best practices. These best practices cover different considerations related to content moderation, including content policies, harassment and abuse detection, moderation actions, and measurement. (Rhyne, TechDirt, 02.09.2020)

- Our mission at Tech Against Terrorism is to support such small platforms in countering terrorist exploitation whilst safeguarding human rights, including via robust and transparent content moderation mechanisms. If you are interested in getting support for your platform, or would like to know more about how we support smaller platforms, you can reach out at contact@techagainstterrorism.org.

On this topic we are also listening to: The Lawfare Podcast: Alissa Starzak on Cloudflare, Content Moderation and the Internet Stack (Lawfare, 03.09.2020)

Islamist terrorism

- The Islamic State's gendered recruitment tactics: Nethra Palepu and Rutvi Zamre analyse how Islamic State (IS) online magazines inspired women to join the group. With IS magazines having dedicated sections aimed at women, Palepu and Zamre emphasise how these sections differ from recruitment and propaganda targeted at men. Whilst material targeting men focuses on “violence and military conquests”, the sections aimed at women focus on the “duty” to give birth and raise the next generation of fighters, but also on the idea of belonging and empowerment. Palepu and Zamre’s analysis shows that IS played on the lack of opportunities women face in many societies and leveraged the notion of female empowerment: “[d]espite the group being blatantly misogynistic, they promise women an ideal life of power and privilege that otherwise seems unattainable.” The authors then analyse the shifting role women are playing in IS, and their more active involvement in combat activities in recent years. In doing so, they call for counterterrorism strategies to be broadened and to consider the pull-factors used by terrorist groups to recruit women. (Palepu and Zamre, ORF, 29.08.2020)

- The Islamic State's strategic trajectory in Africa: In this article, Tomasz Robiecki, Pieter van Ostaeyen, and Charlie Winter analyse the geographical, tactical, and targeting trends of the Islamic State’s franchise model in Africa by identifying key hotspots, emerging strongholds, and potential future threats. They do so by analysing the output of the Islamic State’s al-Naba magazine and its news outlet Nashir News Agency between 2018 and 2020. The authors find that the African Wilayat (provinces) can no longer be considered a “sideshow” to IS central command in Iraq and Syria. Instead, they argue that IS is becoming increasingly dependent on its African provinces’ operations. In addition, they show that the Islamic State in West Africa Province (ISWAP) is the deadliest province, and that its mode of attack is similar to that of IS in Iraq and Syria at the onset of the caliphate in 2014. The authors conclude that the increasing lethality of IS’ African branches proves that IS is far from defeated. (Robiecki, van Ostaeyen and Winter, CTC Sentinel, August 2020)

Counterterrorism

- Technology and extremism in the women, peace, and security agenda: Dr Alexis Henshaw examines the relationship between technology and the Women, Peace and Security (WPS) Agenda. This year marks the 20-year anniversary of UN Security Council Resolution 1325, the backbone of the WPS Agenda, which introduced considerations of gender and sexual violence into work on conflict and conflict resolution, including counterterrorism programmes. On this occasion, Henshaw reflects on what the WPS Agenda should consider for the next decade. In particular, she underlines that, whilst providing new opportunities, technology “has posed new and gendered challenges related to peace and security,” and calls for incorporating such challenges into the broader WPS framework. Mainly, she argues that a next-generation approach to WPS should consider the global gender gap in internet access and use of internet technologies (“the digital divide”), online speech and extremism, privacy and surveillance, as well as the nexus of technology and crime. In doing so, she highlights the role that multistakeholder initiatives can have in bringing together the tech sector, governments, researchers, and civil society to tackle these gendered challenges. (Henshaw, GNET, 01.09.2020)

- Exposure to online terrorist content: stresses and strategies: Dr Zoey Reeve draws attention to the mental health and well-being of those repeatedly and extensively exposed to online terrorist content through their work, for instance tech company moderators and law enforcement case officers. Reeve focuses on the UK Counterterrorism Internet Referral Unit (CTIRU), a unit set up by the UK government to facilitate removal of terrorist material online. The study provides insights into how repeated exposure is handled by CTIRU officers, and aims to build a toolkit for managing such exposure. Reeve divides this toolkit into two parts: building awareness and building resilience. The first part is meant to draw attention to the mental health challenges linked to extensive exposure to terrorist material, from understanding what material may be most harmful to risk and buffer factors, and how professional support can help safeguard mental health. The second part focuses on the mitigation strategies deployed to lessen the effects of exposure. Here, Reeve stresses the important role that establishing a community of individuals can play in sharing experiences and best practices. (Reeve, VoxPol, 02.09.2020)

- Last year, Tech Against Terrorism welcomed Dr Zoey Reeve to an expert panel on Mental Health and Content Moderation for our webinar series, in collaboration with the GIFCT. If you wish to access a recording of this webinar, please send us an email at contact@techagainstterrorism.org.

For any questions or media requests, please get in touch via:

contact@techagainstterrorism.org

Background to Tech Against Terrorism

Tech Against Terrorism is an initiative supporting the global technology sector in responding to terrorist use of the internet whilst respecting human rights, and we work to promote public-private partnerships to mitigate this threat. Our research shows that terrorist groups - both jihadist and far-right terrorists - consistently exploit smaller tech platforms when disseminating propaganda. At Tech Against Terrorism, our mission is to support smaller tech companies in tackling this threat whilst respecting human rights and to provide companies with practical tools to facilitate this process. As a public-private partnership, the initiative works with the United Nations Counter Terrorism Executive Directorate (UN CTED) and has been supported by the Global Internet Forum to Counter Terrorism (GIFCT) and the governments of Spain, Switzerland, the Republic of Korea, and Canada.
